Faculty Office Ext.: 1755

Faculty Building: UB1

Office Number: 205

Dr. Mohamed El-Helw is the director of the Centre for Informatics Science (CIS). He joined Nile University as an Assistant Professor in 2008, where he led the Ubiquitous and Visual Computing Group (UbiComp) at CIS. Prior to moving to NU, Dr. ElHelw was a postdoctoral researcher at the Department of Computing and the Institute of Biomedical Engineering, Imperial College London, where he worked on image-based modeling and rendering techniques for medical simulation, understanding visual perception, and the development of wireless body sensor networks. His research interests focus on ubiquitous systems, computer vision, 3D computer graphics, deep neural networks, and scientific computing. He has a research and development track record in these areas, with more than 70 refereed publications and major research grants totaling more than EGP 25 million.

Dr. ElHelw received a B.Sc. in Computer Science from the American University in Cairo, an M.Sc. in Computer Science from the University of Hull, UK, and a Ph.D. in Computer Science from Imperial College London, University of London, in 2006. He also holds a Diploma in Visual Information Processing (DIC) from Imperial College London. He is a full Professor and a Senior Member of the IEEE.

1) Cairo Innovates Award 2014 for Innovation from the Academy of Scientific Research and Technology (ASRT).

2) Best Paper Award at the International Conference on Pervasive Computing Technologies for Healthcare, London, UK, 2009.

3) Certificate of Recognition, Microsoft Research, 2010.

4) Third place in the International AMD OpenCL Innovation Challenge Competition, 2011.

5) Winner of the 2014 Cairo Innovates Award.

6) Creator and leader of the Ubiquitous and Visual Computing Group (UbiComp).

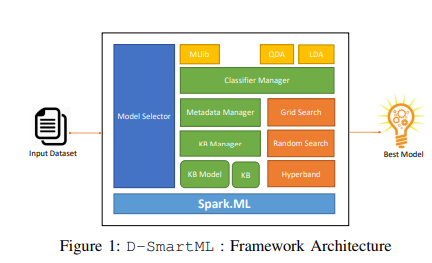

- Machine Learning and Pattern Recognition

- Computer Vision

- Computer Graphics and Visualization

AgriSem: Semantic Web Technologies for Agricultural Data Interoperability

Rice Plant Disease Detection and Diagnosis Using Deep Convolutional Neural Networks and Hyperspectral Imaging

Smart Agricultural Clinic: Egyptian Farmer Electronic Platform for the Future

Subsidies Mobile Wallet (SMW) and Its Applications to Fertilizer Distribution