D-SmartML: A distributed automated machine learning framework

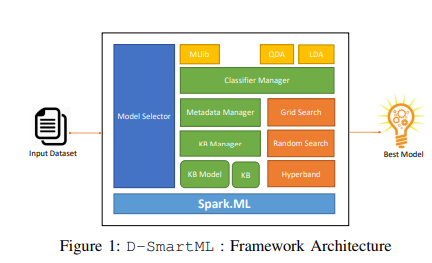

Abstract: Machine learning plays a crucial role in harnessing the value of the massive amounts of data produced every day. Building a high-quality machine learning model is an iterative, complex and time-consuming process that requires solid knowledge of the various machine learning algorithms as well as experience in tuning their hyper-parameters effectively. With the booming demand for machine learning applications, it has been recognized that the number of knowledgeable data scientists cannot scale with the growing data volumes and application needs of our digital world. Consequently, several automated machine learning (AutoML) frameworks have recently been developed to automate the process of Combined Algorithm Selection and Hyper-parameter tuning (CASH). However, a main limitation of these frameworks is that they are built on top of centralized machine learning libraries (e.g., scikit-learn) that run only on a single node, so they do not scale to large data volumes. To tackle this challenge, we demonstrate D-SmartML, a distributed AutoML framework built on top of Apache Spark, a distributed data processing framework. Our framework is equipped with a meta-learning mechanism for automated algorithm selection and supports three automated hyper-parameter tuning techniques: distributed grid search, distributed random search and distributed Hyperband optimization. We will demonstrate the scalability of our framework on large datasets. In addition, we will show how our framework outperforms TransmogrifAI, the state-of-the-art framework for distributed AutoML optimization. ©2020 IEEE
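To make the CASH formulation concrete, the following is a minimal single-process sketch of one successive-halving bracket, the building block that Hyperband repeats with different starting budgets. It is not D-SmartML's API and does not use Spark: the search space, the `quality` and `toy_score` functions, and all parameter names are hypothetical stand-ins for real model training, chosen only so the example runs on its own.

```python
import random

# Hypothetical CASH search space: each algorithm carries its own
# integer hyper-parameter ranges (names are illustrative only).
SEARCH_SPACE = {
    "random_forest": {"num_trees": (10, 200), "max_depth": (2, 20)},
    "logistic_regression": {"max_iter": (10, 200), "reg_strength": (1, 100)},
}


def sample_config(rng):
    """Random-search step: pick an algorithm, then sample its hyper-parameters."""
    algorithm = rng.choice(sorted(SEARCH_SPACE))
    params = {
        name: rng.randint(low, high)
        for name, (low, high) in SEARCH_SPACE[algorithm].items()
    }
    return {"algorithm": algorithm, "params": params}


def quality(config):
    """Toy stand-in for how good a configuration is, in [0, 1]."""
    ranges = SEARCH_SPACE[config["algorithm"]]
    positions = [
        (config["params"][name] - low) / (high - low)
        for name, (low, high) in ranges.items()
    ]
    return sum(positions) / len(positions)


def toy_score(config, budget):
    """Toy stand-in for validation accuracy after training with `budget` resources."""
    return quality(config) * budget / (budget + 1)


def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Keep the top 1/eta of the configurations each round, multiplying the budget by eta."""
    if not configs:
        raise ValueError("configs must not be empty")
    survivors = list(configs)
    budget = min_budget
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        survivors = ranked[: max(1, len(ranked) // eta)]
        budget *= eta
    return survivors[0]


if __name__ == "__main__":
    rng = random.Random(0)
    candidates = [sample_config(rng) for _ in range(9)]
    print(successive_halving(candidates, toy_score))
```

In a distributed setting, the evaluations inside each round are independent and could be dispatched in parallel; the sketch keeps them sequential for clarity.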