A Deep Learning-Based Benchmarking Framework for Lane Segmentation in the Complex and Dynamic Road Scenes

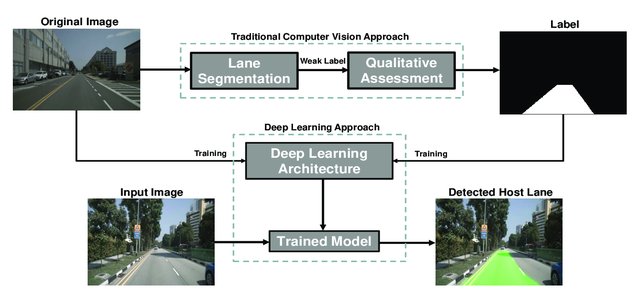

Automatic lane detection is a classical task in autonomous vehicles that traditional computer vision techniques can perform. However, such techniques cannot reliably achieve high accuracy at an acceptable computational cost for real-time detection in complex and dynamic road scenes. Deep neural networks have proven able to achieve competitive accuracy and time complexity when trained on manually labeled data. Yet the unavailability of segmentation masks for host lanes in harsh road environments hinders the use of fully supervised methods for this problem. This work proposes integrating traditional computer vision techniques and deep learning methods to develop a reliable benchmarking framework for lane detection in complex and dynamic road scenes. First, an automatic segmentation algorithm based on a sequence of traditional computer vision techniques is evaluated experimentally. This algorithm precisely segments the semantic region of the host lane in the complex urban images of the nuScenes dataset used in this framework, thereby generating the corresponding weak labels. The generated data is then qualitatively evaluated and used to train and benchmark five state-of-the-art FCN-based architectures: SegNet, Modified SegNet, U-Net, ResUNet, and ResUNet++. The trained models are evaluated visually and quantitatively by treating lane detection as a binary semantic segmentation task. The results show robust performance, especially for ResUNet++, which outperforms all the other models when tested on different complex road scenes with dynamic scenarios and various lighting conditions.
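To make the quantitative side of "lane detection as a binary semantic segmentation task" concrete, the following is a minimal sketch of how a predicted host-lane mask could be scored against a weak label. The abstract does not name the metrics used, so the choice of intersection over union (IoU) and the Dice coefficient is an assumption, and the function name `binary_segmentation_scores` and the toy masks are purely illustrative.

```python
import numpy as np


def binary_segmentation_scores(pred, target, eps=1e-7):
    """Score a binary mask against a reference mask.

    Assumption: IoU and Dice are used here only as common choices for
    binary segmentation; the source does not state which metrics it reports.
    """
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)

    # Pixel-level confusion counts for the positive (host lane) class.
    tp = np.logical_and(pred, target).sum()
    fp = np.logical_and(pred, ~target).sum()
    fn = np.logical_and(~pred, target).sum()

    # eps keeps the ratios defined when both masks are empty.
    iou = tp / (tp + fp + fn + eps)
    dice = 2 * tp / (2 * tp + fp + fn + eps)
    return {"iou": float(iou), "dice": float(dice)}


# Toy 3x4 masks (hypothetical data): 1 marks host-lane pixels.
weak_label = np.array([[0, 1, 1, 0],
                       [0, 1, 1, 0],
                       [0, 1, 1, 0]])
prediction = np.array([[0, 1, 1, 0],
                       [0, 1, 0, 0],
                       [0, 1, 1, 1]])

scores = binary_segmentation_scores(prediction, weak_label)
print(scores)
```

In this toy case the prediction shares five lane pixels with the weak label, adds one false positive, and misses one pixel, so the IoU is 5/7 and the Dice coefficient is 10/12. Because the reference masks are weak labels rather than manual annotations, such scores measure agreement with the automatic labeling, not with ground truth.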